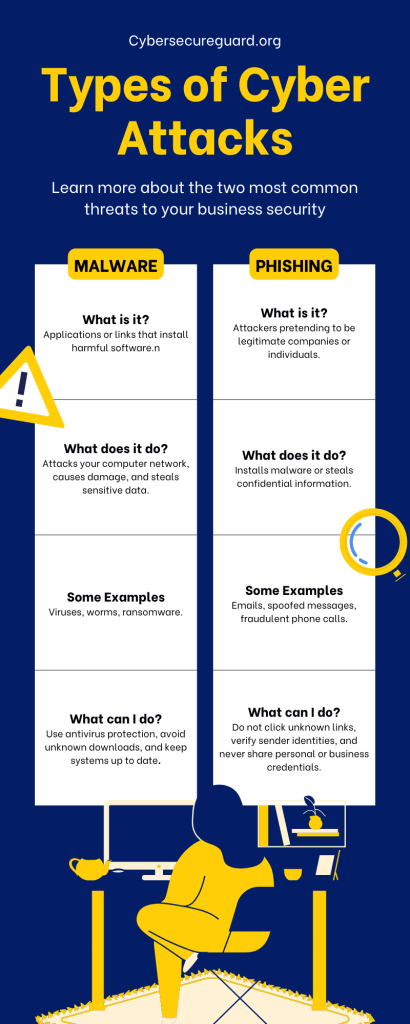

Think of a classic phishing email. Not too hard, right? You’re probably picturing a poorly written message full of spelling mistakes, an odd greeting like “Dear valued customer”, and a suspicious-looking sender such as info@amaz0n-support.com. For years, spotting these scams was almost effortless—a quick glance at the clumsy wording was usually enough to send it straight to the trash.

But those days are gone. Cybercriminals have leveled up in ways that most organizations are not prepared for. With the power of Artificial Intelligence, phishing emails are no longer clumsy or easy to dismiss. Instead, they’re polished, convincing, and often indistinguishable from legitimate business communication. No more obvious typos, no strange grammar—just clean, professional messages that can mimic the style of real companies, real colleagues, and real institutions with frightening accuracy.

This marks a genuine turning point in the evolution of digital threats. The very same AI technologies that bring us helpful chatbots, real-time translations, and creative writing tools are now being weaponized by attackers. The result is a new generation of phishing campaigns that combine psychological manipulation, data intelligence, and automation to target even the most cautious recipients—at scale, and at speed.

In this article, I’ll break down exactly why AI-generated phishing emails are so much harder to detect than anything we’ve seen before, and what that means for the way you and your business need to think about security.

1. The Death of Typos: Flawless Language and Grammar

Not too long ago, spotting a phishing email was almost laughably easy. Awkward phrasing, endless typos, and broken grammar were dead giveaways. Lines like “Dear valuedest customer” or “You account has been suspend” practically screamed scam. Many recipients didn’t even need to finish reading—the sloppy language alone was enough to raise red flags.

Today, that safety net is gone. AI-powered language models such as ChatGPT, Google Gemini, or Claude have armed cybercriminals with the ability to produce messages that are grammatically perfect, tonally appropriate, and highly convincing. These tools don’t just avoid errors—they can mirror tone, jargon, and even the structural habits of specific organizations with unsettling precision.

That means an AI can draft an email in the dry, formal style of a bank, in the casual tone of a coworker, or as an urgent notice from your IT department demanding immediate action. Each version sounds authentic because the AI understands context and adapts its output accordingly.

Even more dangerous: AI isn’t limited to writing generic professional emails. It can replicate the specific communication style of real companies or real individuals. If an organization regularly uses certain phrases, formatting conventions, or even signature structures, AI can study and reproduce them almost perfectly. The result is a message that feels familiar, trustworthy, and far less likely to raise suspicion.

What’s particularly insidious is that this capability is available to virtually anyone. Attackers no longer need to be skilled writers or native speakers of a given language. A non-native English speaker can produce a flawlessly written email targeting an English-speaking company in seconds—with no technical expertise whatsoever.

This is where the real danger lies. Our first line of defense—the instinctive gut reaction when we scan an incoming message—has been severely weakened. Where once clumsy wording triggered instant doubt, we’re now faced with polished, natural-sounding text that feels entirely legitimate at first glance.

2. Hyper-Personalization at Scale

Phishing used to be a numbers game—a blunt “spray and pray” approach. Attackers would blast out tens of thousands of nearly identical, generic emails and simply hope that a small fraction of recipients would take the bait. The vast majority of people could dismiss these messages easily because they felt impersonal, vague, and out of context.

Artificial Intelligence has turned this model upside down. Instead of relying on volume alone, AI enables personalization at massive scale. By analyzing publicly available data—LinkedIn profiles, X (formerly Twitter) posts, press releases, company websites, conference attendee lists, job postings, and even podcast appearances—AI systems can generate emails that feel tailor-made for each individual recipient.

Imagine opening an email that:

- Uses your full name correctly, including proper spelling and title.

- References a recent corporate event your company hosted or attended.

- Mentions a shared professional connection: “I spoke with [actual colleague’s name] last week, who suggested I reach out to you directly…”

- Adopts the industry-specific jargon you use every day.

- References a project, product launch, or initiative that’s genuinely relevant to your role.

This level of customization dramatically increases the credibility of the message. It no longer looks like a random scam—it looks like a natural continuation of your real-world professional interactions. And because all of the information comes from publicly accessible sources, nothing in the email feels fabricated at first glance.

The power of AI lies in its ability to do this not just once, but thousands of times simultaneously. What used to be the painstaking work of a skilled social engineer—hours of research, manual crafting, individual sending—can now be fully automated. Each recipient gets an email that feels uniquely relevant, even though it was generated in bulk by an algorithm that spent seconds on each one.

And that’s the real threat. When a phishing attempt resonates personally—when it references your job title, your network, your current projects—it bypasses the natural skepticism we apply to unknown senders. The message doesn’t feel like spam. It feels like someone who actually knows you.

3. Context Awareness and Believable Narratives

Old-school phishing emails were notoriously clumsy in their setups. The classic “Your account has been suspended, click here to restore access” was so generic and out of context that most recipients recognized it as fraud almost immediately. The lack of a credible backstory was actually one of the strongest defenses users had—if the email didn’t make sense in the context of their day, they questioned it.

AI changes this dynamic entirely. Modern phishing campaigns can now generate plausible, multi-layered narratives that feel like a completely natural part of your workday. Instead of blunt scare tactics, the email arrives as an ordinary continuation of something you’re apparently already involved in.

Consider this example:

Subject: Follow-up on yesterday’s Teams meeting — Q3 project planning

Body: Hi [Name], thanks for your time yesterday. As discussed, I’ve attached the revised version of the document with the changes we agreed on. Could you review the updates and send your feedback before 4 PM today? The client is waiting on our side.

On the surface, nothing feels unusual. The message references a meeting (a common daily occurrence), attaches a file (perfectly routine in professional communication), names a deadline (creating urgency), and even mentions a client (adding pressure). For a busy employee managing multiple projects, this doesn’t look like a threat—it looks like a to-do item.

The key danger here is contextual awareness. AI doesn’t just write clean sentences; it can embed messages into believable scenarios that map directly onto the workflows of modern organizations. Whether it’s referencing project reviews, financial approvals, HR onboarding, IT access requests, or invoice processing, the email is engineered to mirror the actual rhythms of how people work.

This is especially dangerous in larger organizations, where employees frequently receive requests from people they don’t know personally—from different departments, partner companies, or external vendors. In those environments, it’s entirely normal to process a request without picking up the phone to verify it first.

AI has effectively shifted phishing from the realm of “obviously fake” into the world of “perfectly plausible.” And plausible is far harder to defend against.

4. The Automation of Deception

In the past, sophisticated phishing required time, effort, and genuine expertise. A skilled attacker had to manually research targets, gather personal or corporate details, craft convincing messages, and send them individually. This meant that highly personalized, targeted phishing—often called spear-phishing—was generally reserved for high-value targets: executives, finance teams, or individuals with privileged system access.

AI has obliterated those limitations entirely. With today’s tools, a cybercriminal no longer needs hours of manual work or specialized knowledge. They can simply instruct an AI agent to:

- Scrape data from LinkedIn, company websites, social media, and news sources.

- Generate thousands of unique, personalized emails tailored to different recipients.

- Embed realistic narratives, fabricated deadlines, or plausible file attachments for added credibility.

- Dispatch those messages at scale—within minutes.

This automation dramatically lowers the barrier to entry. What once required technical skill, patience, and access to underground expertise can now be executed by almost anyone with an internet connection and access to AI tools. The criminal ecosystem doesn’t need highly skilled operators anymore. It needs orchestrators—people who know how to prompt AI systems to do the heavy lifting.

Worse still, automated systems don’t get tired, distracted, or sloppy. They can run continuously, refine messages to evade spam filters, adapt to individual responses, and even A/B test which narrative variants generate the highest click-through rates—essentially running a data-driven marketing campaign, but for fraud.

The outcome is a threat landscape where highly personalized, believable phishing is no longer the exception reserved for high-profile targets. It’s becoming the baseline. Any business, regardless of size or industry, can now be on the receiving end of an individually crafted, psychologically sophisticated attack. And most organizations are not staffed or trained to deal with that reality.

5. Multimodal Threats: More Than Just Text

Phishing has evolved far beyond the email inbox. With the rise of generative AI, attackers are no longer limited to written messages—they can now deploy multimodal attacks that combine email, voice, and even visual deception into one seamless, layered campaign.

Here’s how it looks in practice:

Voice Cloning (Vishing)

Imagine receiving a highly convincing phishing email in the morning. A few hours later, you receive a phone call from what sounds exactly like your manager’s voice, following up on the email: “Hey, did you get what I sent this morning? I need that approved before end of day. It’s urgent.” With AI-powered voice synthesis, criminals can create realistic clones of familiar voices from just a few seconds of audio, often scraped from public sources like podcast appearances, YouTube interviews, or company presentations.

Fake Websites and Login Pages

AI can help attackers build near-identical replicas of legitimate websites, complete with accurate fonts, branding, and layouts, in very little time. A link embedded in the email takes the recipient to a login page that is visually almost indistinguishable from the real thing. The moment they enter their credentials, those credentials are in the attacker’s hands.

Deepfakes and Synthetic Media

Beyond emails and phone calls, AI can produce convincing fake video messages: a CEO delivering a briefing, a finance director approving an unusual wire transfer, or an IT manager instructing employees to reset their credentials via a specific link. These synthetic videos, while not always perfect, are convincing enough when viewed quickly by a recipient who has no reason to suspect fraud.

This convergence of channels creates what security professionals call layered attacks—campaigns that feel authentic from every angle simultaneously. Where traditional phishing relied on a single deceptive message, AI-enabled phishing can orchestrate an entire reality across email, phone, and web, leaving the victim little room for doubt.

The implication for businesses is significant: it’s no longer sufficient to train employees to spot suspicious emails. They also need to verify unusual phone calls, question unexpected video communications, and know what to do when something feels off—even if it looks and sounds completely real.

The New Rules of Vigilance

Since the old warning signs—bad grammar, odd phrasing, clumsy design—are rapidly disappearing, defending yourself and your organization requires a fundamentally updated approach.

Distrust Perfection

A flawless, well-written email is no longer automatically trustworthy. Be especially cautious when a message looks too polished, too urgent, or too convenient. Perfection should prompt questions, not reassurance.

Verify the Sender Carefully

Hover over the sender’s name to check the full email address before doing anything else. An email from Microsoft Support will never originate from service@microsoft-security.xyz.com: the domain that actually matters in that address is xyz.com, and “microsoft-security” is only a subdomain label that the owner of xyz.com can create freely. Attackers register lookalike domains specifically to pass a casual glance. Pay attention to every character.
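The sender check described above can also be approximated in code. The following is a minimal sketch, not a complete filter: `TRUSTED_DOMAINS` is a hypothetical allowlist you would maintain yourself, and the helper names are invented for illustration. It accepts a sender only if its domain is a trusted domain or a subdomain of one, and flags untrusted addresses that merely contain a trusted brand name.

```python
from email.utils import parseaddr

# Hypothetical allowlist: domains your organization actually expects mail from.
TRUSTED_DOMAINS = {"microsoft.com", "example-bank.com"}


def sender_domain(from_header: str) -> str:
    """Return the lowercased domain part of a From header, or '' if absent."""
    _display_name, address = parseaddr(from_header)
    return address.rpartition("@")[2].lower() if "@" in address else ""


def is_trusted_sender(from_header: str) -> bool:
    """True only if the domain is a trusted domain or a subdomain of one."""
    domain = sender_domain(from_header)
    return any(
        domain == trusted or domain.endswith("." + trusted)
        for trusted in TRUSTED_DOMAINS
    )


def looks_like_impersonation(from_header: str) -> bool:
    """Flag untrusted senders whose domain contains a trusted brand name."""
    if is_trusted_sender(from_header):
        return False
    domain = sender_domain(from_header)
    brands = {trusted.split(".")[0] for trusted in TRUSTED_DOMAINS}
    return any(brand in domain for brand in brands)


if __name__ == "__main__":
    header = "Microsoft Support <service@microsoft-security.xyz.com>"
    print(sender_domain(header))
    print(is_trusted_sender(header), looks_like_impersonation(header))
```

Note the limits of this sketch: a simple substring match will not catch character swaps such as amaz0n for amazon, and the From header itself can be forged, so a check like this complements rather than replaces the manual look at the full address.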

Slow Down Deliberately

Urgency is still the attacker’s most reliable tool. Artificial deadlines such as “respond within the hour” or “action required today” are designed to override your judgment. When you feel rushed, that’s precisely the moment to pause. Verify first, act second.

Verify Through Independent Channels

Never click a link in a suspicious email to check whether it’s legitimate. If you need to log in somewhere, type the URL directly into your browser or use the official app. If a request appears to come from a colleague, call them directly or send a new message through a known, trusted channel. Don’t reply to the suspicious email itself.
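One reason links in an email deserve this distrust is that the text a link displays and the address it actually opens are independent of each other. As a rough illustration, the sketch below scans an HTML email body and reports links whose visible text looks like one host while the underlying `href` points to another. The class and function names are invented for this example, and the sample hosts are placeholders.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class LinkCollector(HTMLParser):
    """Collect (href, visible text) pairs for every <a> tag in an HTML body."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href") or ""
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def mismatched_links(html_body):
    """Return links whose visible text shows a different host than the href."""
    collector = LinkCollector()
    collector.feed(html_body)
    flagged = []
    for href, text in collector.links:
        # Only compare when the visible text itself looks like a URL or host.
        if not text or " " in text:
            continue
        shown = text if "://" in text else "https://" + text
        shown_host = (urlparse(shown).hostname or "").lower()
        href_host = (urlparse(href).hostname or "").lower()
        if "." in shown_host and shown_host != href_host:
            flagged.append((href, text))
    return flagged


if __name__ == "__main__":
    body = '<a href="https://login.example-attacker.test/x">www.mybank.com</a>'
    print(mismatched_links(body))
```

A link labeled with ordinary words such as “click here” is not flagged by this sketch, because there is no displayed host to compare against. That is exactly why typing the address yourself remains the safer habit.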

Enable Multi-Factor Authentication (MFA)

MFA remains one of the most effective safeguards available. Even if an attacker successfully obtains your password through a phishing attack, MFA creates a second barrier that significantly limits their ability to gain access. It’s not a perfect defense, but it dramatically reduces the damage a successful credential theft can cause.
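To make the “second barrier” concrete, here is a small sketch of how the six-digit codes from a typical authenticator app are derived, following the time-based one-time password scheme in RFC 6238 with HMAC-SHA1. It is meant only to show why a stolen password alone is not enough; the function name and the sample secret are illustrative, and a real deployment should rely on an established library rather than this snippet.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    # The moving factor is the number of 30-second steps since the Unix epoch.
    counter = unix_time // step
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte pick a 4-byte window.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


if __name__ == "__main__":
    # Shared secret from the RFC 6238 test vectors (ASCII "12345678901234567890").
    secret = b"12345678901234567890"
    print(totp(secret, 59, digits=8))  # RFC 6238 test vector: 94287082
```

The code depends on a secret that never travels in the email or the login form, and it changes every 30 seconds. An attacker who captures only the password cannot compute it. A victim who types a current code into a fake login page can still have it relayed in real time, which is one reason MFA reduces the damage without being a perfect defense.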

Invest in Awareness Training: Ongoing, Not One-Off

A single annual security training session is no longer sufficient. The threat landscape changes continuously, and so must your organization’s awareness of it. Regular, scenario-based training that incorporates current attack techniques, including AI-generated phishing examples, is far more effective than static, once-a-year compliance exercises.

Conclusion: How to Detect AI-Generated Phishing Emails

AI has made phishing emails much more advanced. In the past, they were easy to spot because of bad grammar or strange messages. Today, phishing emails can look perfect, personal, and very realistic. Even experienced people can be fooled. Because of this, the old warning signs are not enough anymore. You need a new way of thinking. Be careful, question what you see, and always take a moment to check if something is real.

Do not trust emails just because they look professional. Always check the sender. Be careful with urgent messages that try to pressure you. If something feels unusual, confirm it through another channel, like a phone call. Use multi-factor authentication to protect your accounts. And make sure your team understands these risks. AI is making attacks stronger, but you can stay safe. The best protection is a mix of awareness, good habits, and simple security tools. Stay alert—and you will stay in control.

Executive Security Briefing – Slack Channel

If you prefer ongoing exchange instead of one-way updates, you are welcome to join my Slack workspace. This is a focused space for business owners, consultants, and decision-makers who take cybersecurity seriously and want practical, real-world discussions — without noise or marketing pressure.

Inside the community, I share:

• Current threat insights

• Practical security recommendations

• Risk discussions based on real scenarios

• Direct access for professional exchange

The workspace is intentionally small and curated to ensure quality conversations. For founders, consultants, and decision-makers who prefer structured exchange over passive reading, this private Slack channel serves as a focused briefing environment.
